Publications

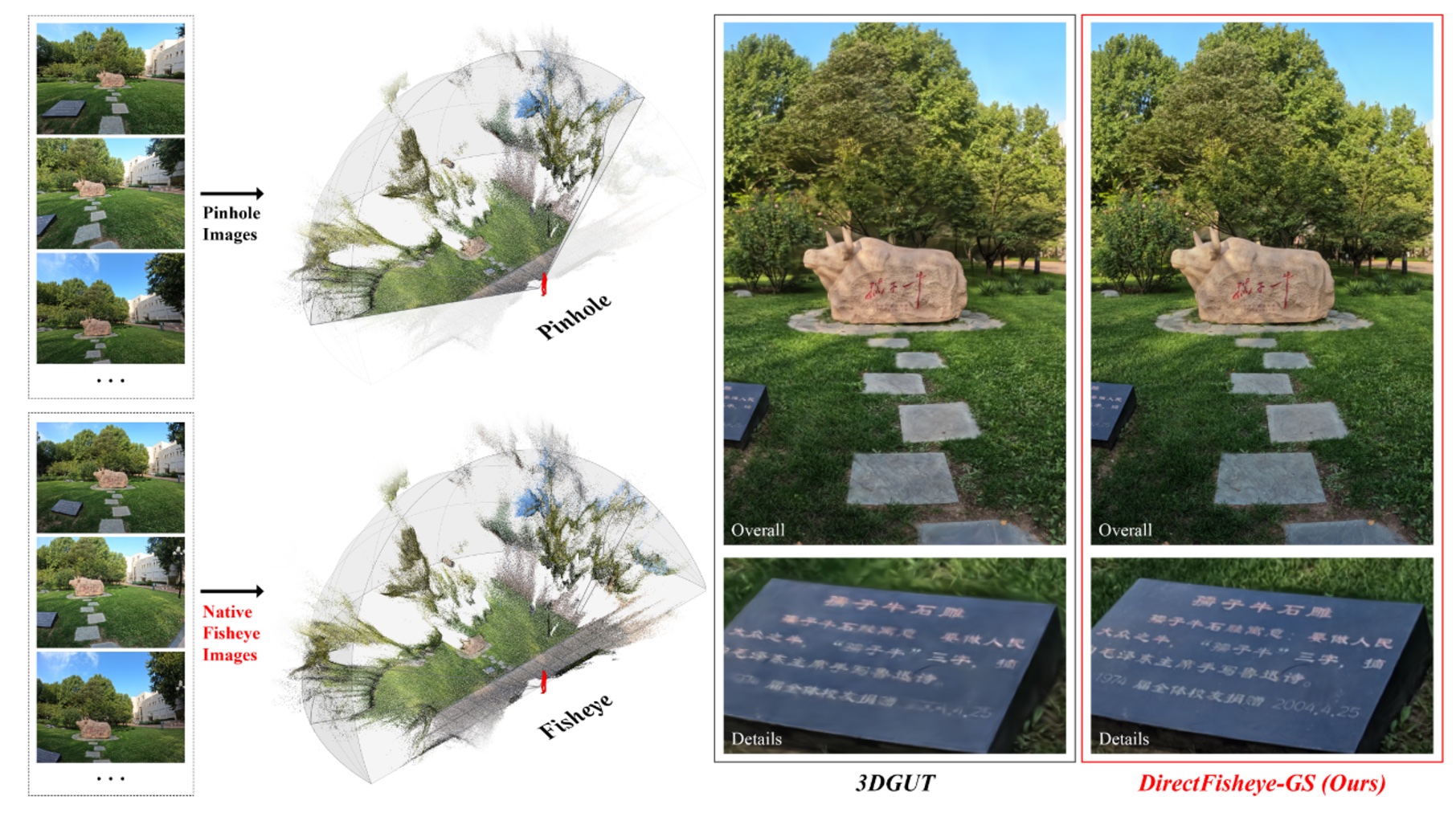

DirectFisheye-GS: Enabling Native Fisheye Input in Gaussian Splatting with Cross-View Joint Optimization

Zhengxian Yang*, Fei Xie*, Xutao Xue, Rui Zhang, Taicheng Huang, Yang Liu, Mengqi Ji, Tao Yu✝

CVPR 2026 [Highlight]

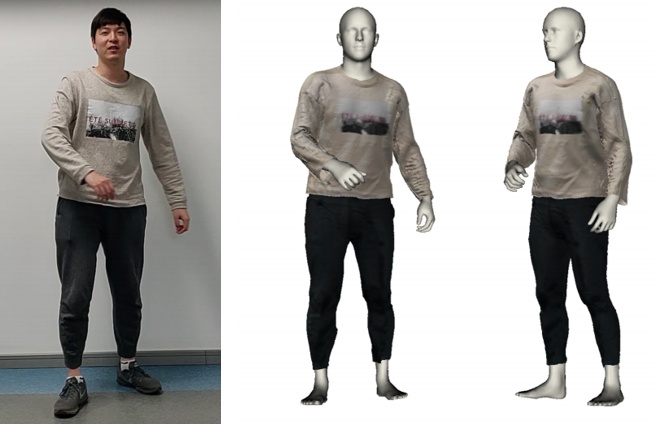

MetricHMSR: Metric Human Mesh and Scene Recovery from Monocular Images

Chentao Song*, He Zhang*, Haolei Yuan, Haozhe Lin, Jianhua Tao, Hongwen Zhang✝, Tao Yu✝

CVPR 2026

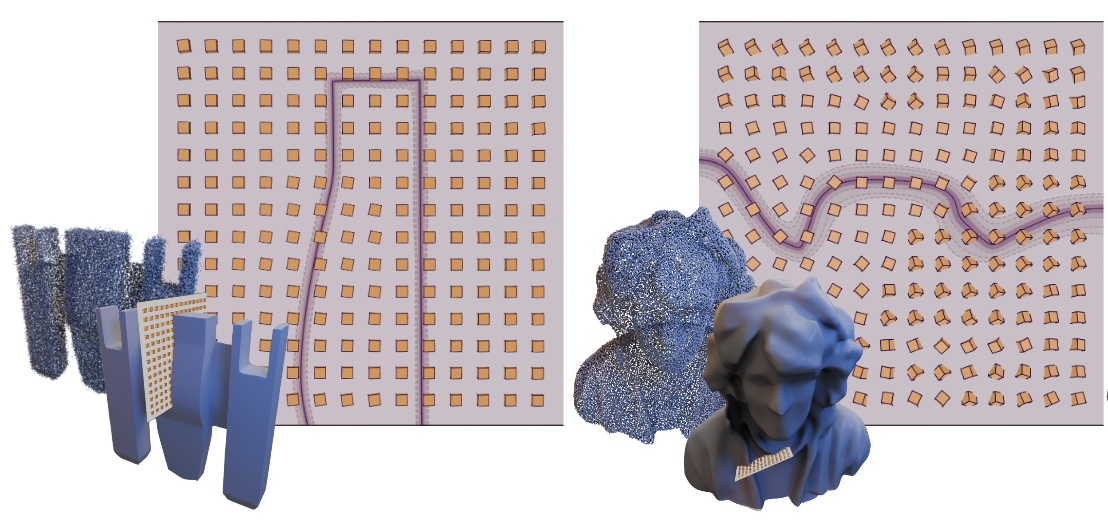

Neural Octahedral Field: Octahedral Prior for Simultaneous Smoothing and Sharp Edge Regularization

Ruichen Zheng, Tao Yu✝, Ruizhen Hu✝

SIGGRAPH Asia 2025 & TOG

ELGAR: Expressive Cello Performance Motion Generation for Audio Rendition

SIGGRAPH 2025

ImViD: Immersive Volumetric Videos for Enhanced VR Engagement

CVPR 2025 [Highlight]

V2V3D: View-to-View Denoised 3D Reconstruction for Light Field Microscopy

Jiayin Zhao, Zhenqi Fu, Tao Yu✝, Hui Qiao✝

CVPR 2025

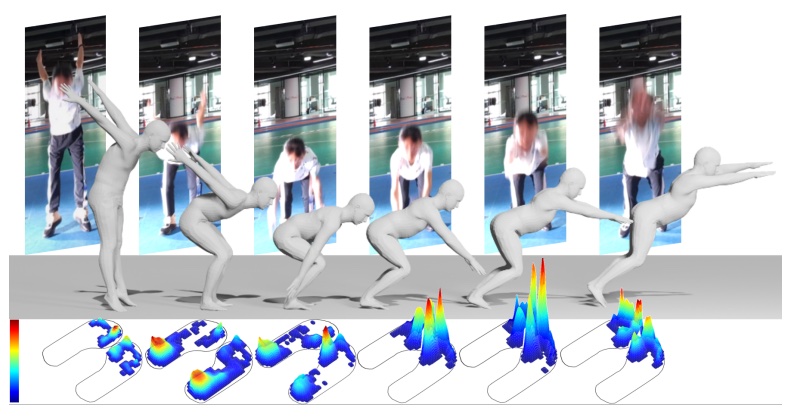

MotionPRO: Exploring the Role of Pressure in Human MoCap and Beyond

Shenghao Ren*, Yi Lu*, Jiayi Huang, Jiayi Zhao, He Zhang, Tao Yu, Qiu Shen✝, Xun Cao

CVPR 2025 [Highlight]

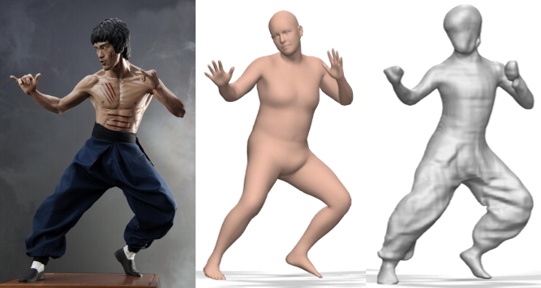

PSHuman: Photorealistic Single-image 3D Human Reconstruction using Cross-Scale Multiview Diffusion and Explicit Remeshing

CVPR 2025

Trust in Virtual Agents: Exploring the Role of Stylization and Voice

Yang Gao, Yangbin Dai, Guangtao Zhang, Honglei Guo, Fariba Mostajeran, Binge Zheng, Tao Yu✝

IEEE TVCG (IEEE VR 2025)

Neural Fluid Simulation on Geometric Surfaces

Haoxiang Wang, Tao Yu✝, Hui Qiao, Qionghai Dai

ICLR 2025

Super-NeRF: View-consistent Detail Generation for NeRF Super-resolution

Yuqi Han, Tao Yu✝, Xiaohang Yu, Yuwang Wang, Qionghai Dai

TVCG 2025

ImmersiveNeRF: Hybrid Radiance Fields for Unbounded Immersive Light Field Reconstruction

Xiaohang Yu, Haoxiang Wang, Yuqi Han, Lei Yang, Tao Yu✝, Qionghai Dai

TVCG 2025

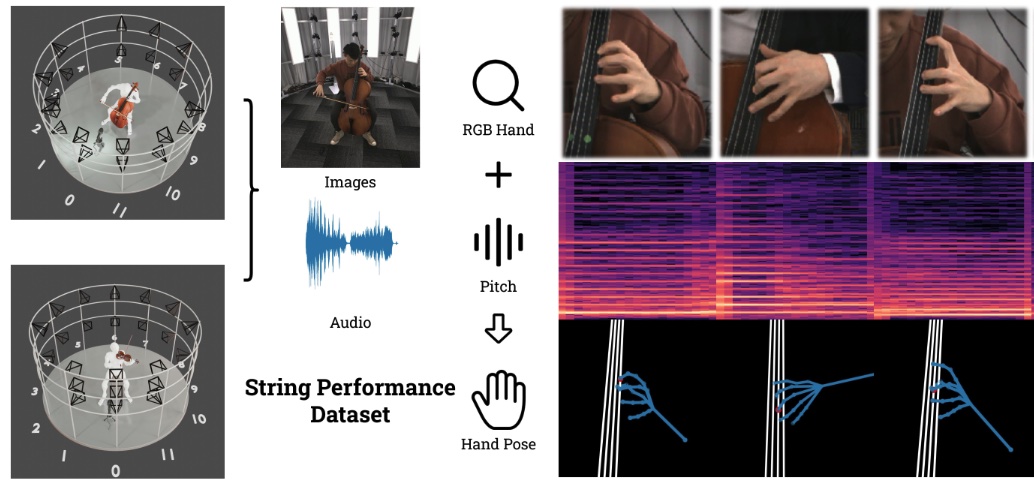

Audio Matters Too! Enhancing Markerless Motion Capture with Audio Signals for String Performance Capture

Yitong Jin*, Zhiping Qiu*, Yi Shi, Shuangpeng Sun, Chongwu Wang, Donghao Pan, Jiachen Zhao, Zhenghao Liang, Yuan Wang, Xiaobing Li, Feng Yu, Tao Yu✝, Qionghai Dai✝

ACM SIGGRAPH 2024 & TOG

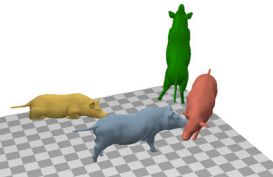

Three-dimensional surface motion capture of multiple freely moving pigs using MAMMAL

Liang An, Jilong Ren, Tao Yu, Tang Hai✝, Yichang Jia✝, Yebin Liu✝

Nature Communications

MMVP: A Multimodal MoCap Dataset with Vision and Pressure Sensors

He Zhang*, Shenghao Ren*, Haolei Yuan*, Jianhui Zhao, Fan Li, Shuangpeng Sun, Zhenghao Liang, Tao Yu✝, Qiu Shen✝, Xun Cao✝

IEEE CVPR 2024

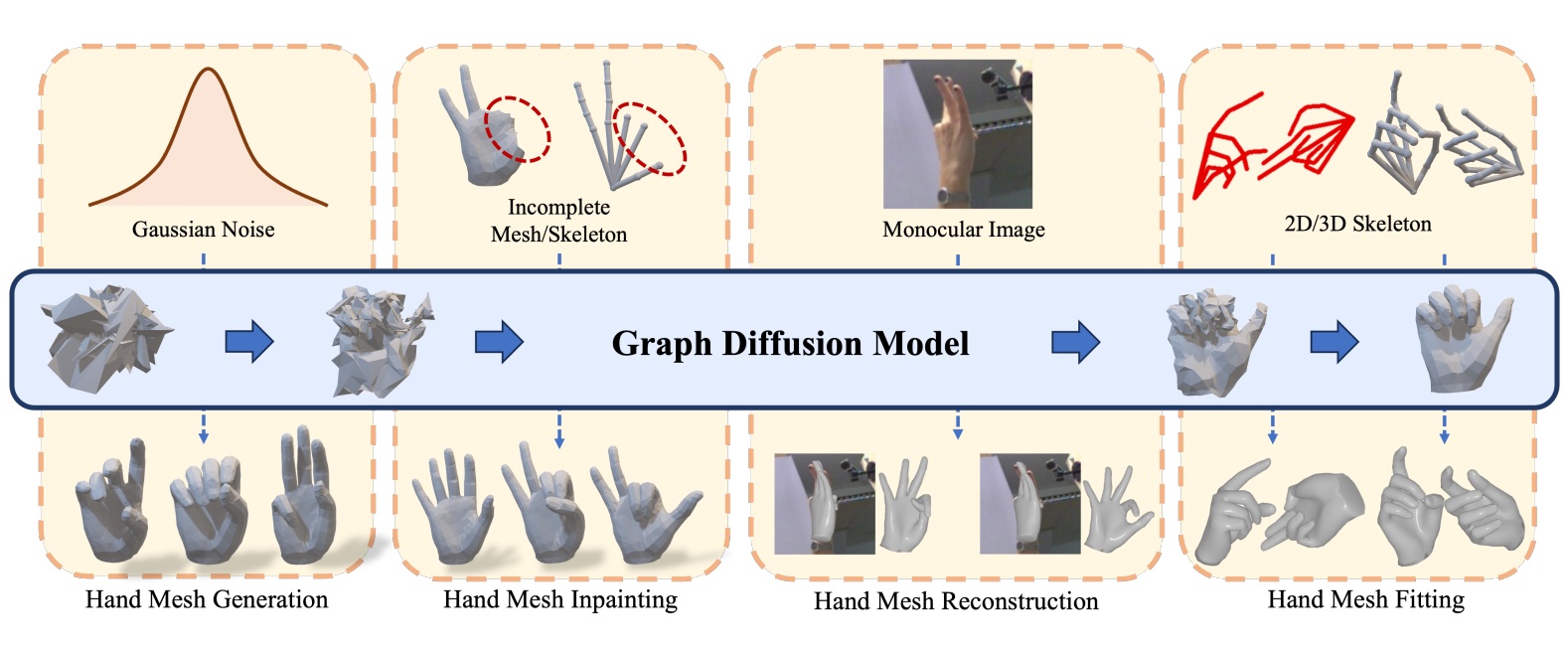

HHMR: Holistic Hand Mesh Recovery by Enhancing the Multimodal Controllability of Graph Diffusion Models

Mengcheng Li, Hongwen Zhang, Yuxiang Zhang, Ruizhi Shao, Tao Yu✝, Yebin Liu✝

IEEE CVPR 2024 [Highlight]

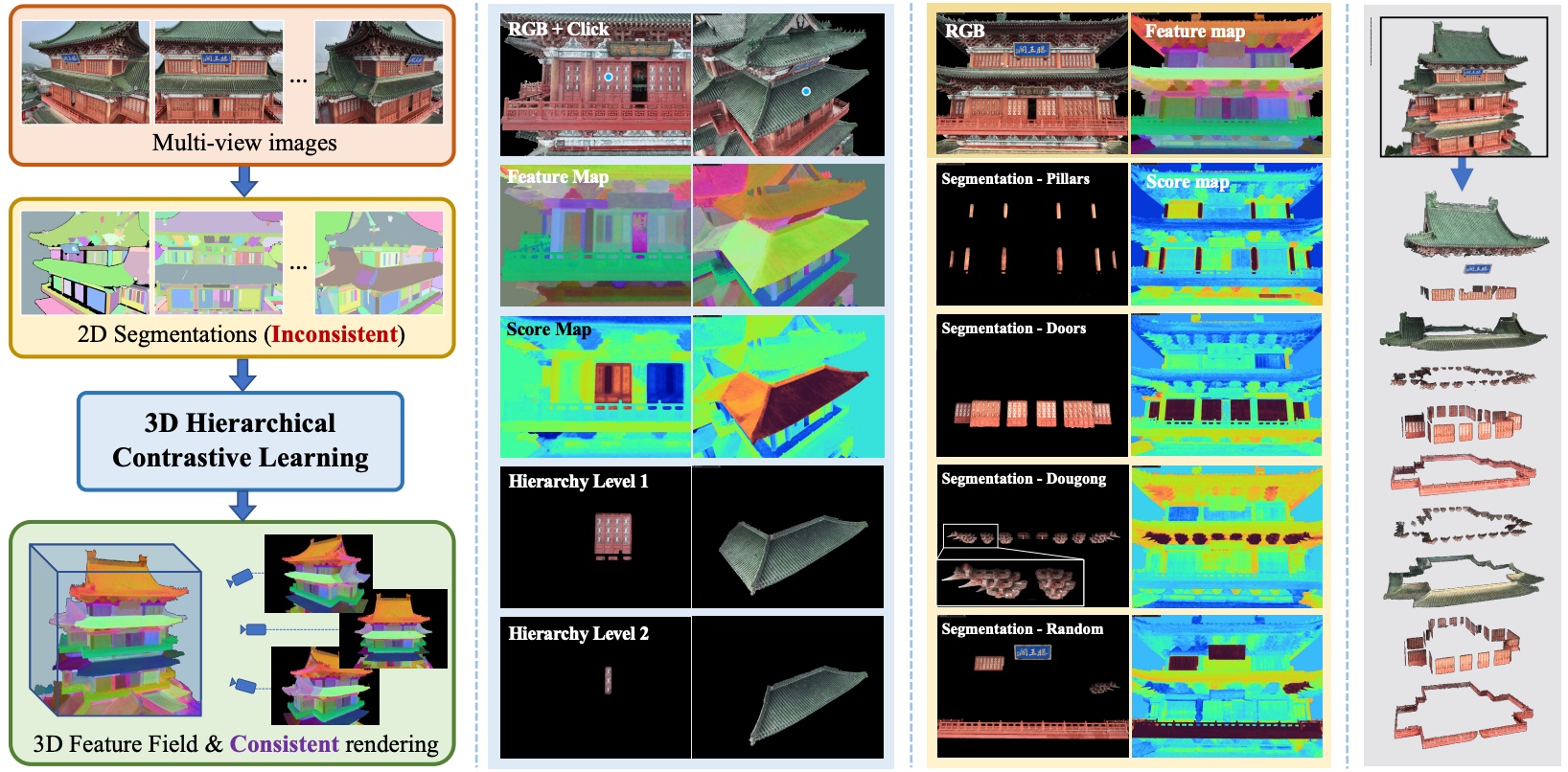

OmniSeg3D: Omniversal 3D Segmentation via Hierarchical Contrastive Learning

Haiyang Ying, Yixuan Yin, Jinzhi Zhang, Fan Wang, Tao Yu, Ruqi Huang, Lu Fang

IEEE CVPR 2024

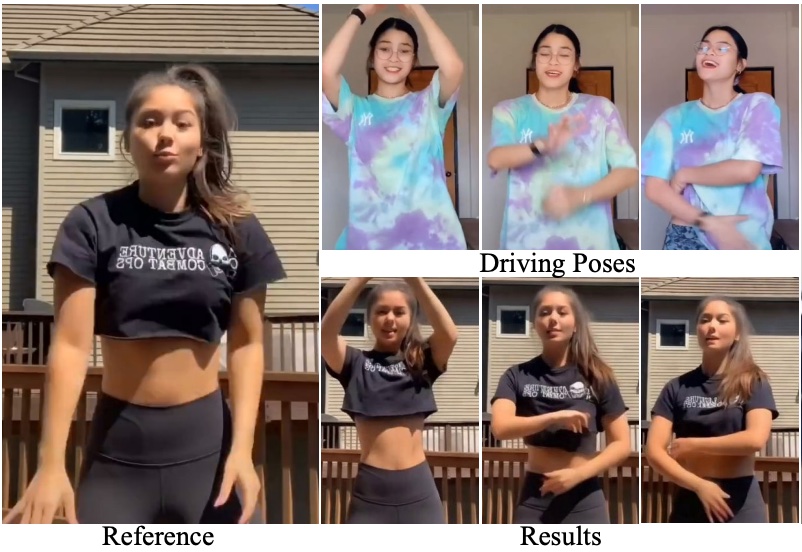

DiffPerformer: Iterative Learning of Consistent Latent Guidance for Diffusion-based Human Video Generation

Chenyang Wang, Zerong Zheng, Tao Yu, Xiaoqian Lv, Bineng Zhong, Shengping Zhang, Liqiang Nie

IEEE CVPR 2024

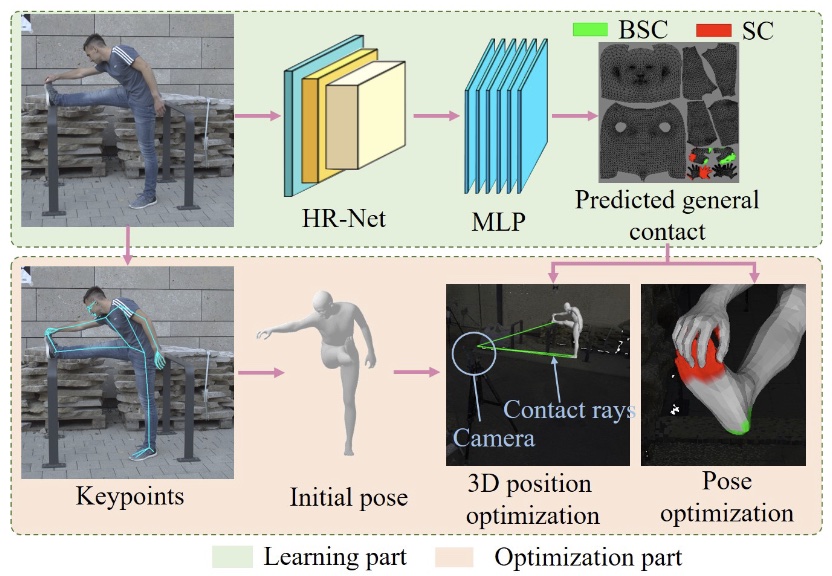

Human Pose Estimation with General Contact

He Zhang, Jianhui Zhao, Fan Li✝, Yitian Wu, Chao Tan, Shuangpeng Sun, Yaohua Wu, You Li, Tao Yu✝

Springer CVMJ

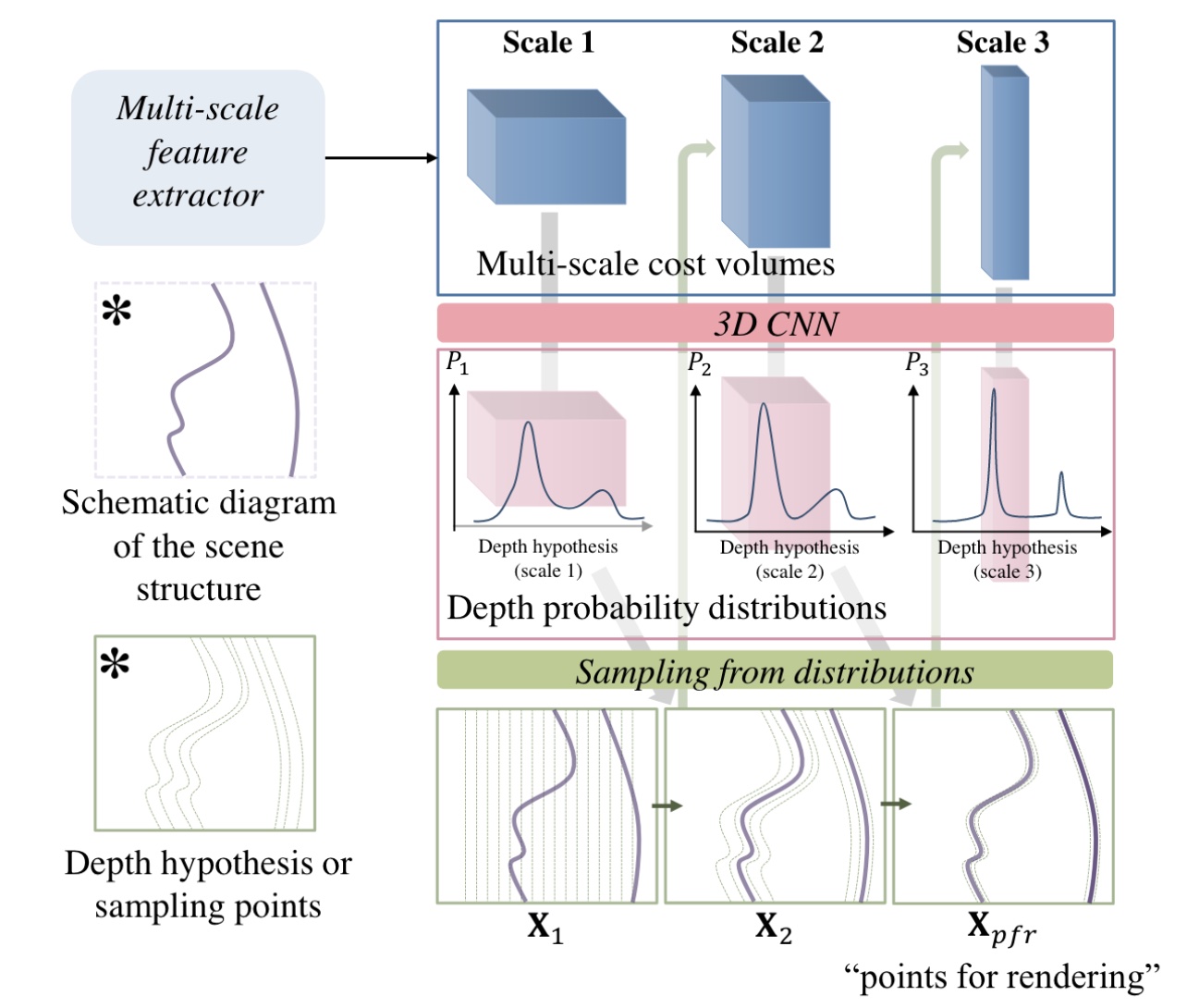

ProbIBR: Fast Image-Based Rendering with Learned Probability-Guided Sampling

Yuemei Zhou, Tao Yu, Zerong Zheng, Gaochang Wu, Guihua Zhao, Wenbo Jiang, Ying Fu, Yebin Liu

IEEE TVCG

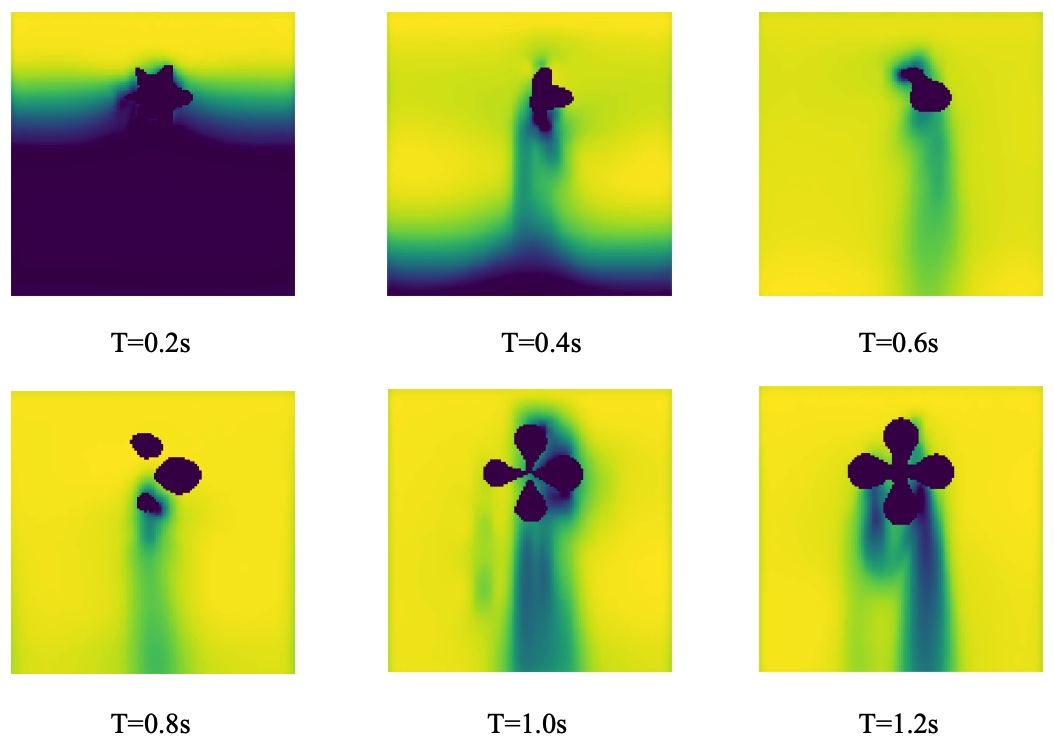

Neural Physical Simulation with Multi-resolution Hash Grid Encoding

Haoxiang Wang, Tao Yu✝, Tianwei Yang, Hui Qiao✝, Qionghai Dai

AAAI 2024 [Oral Presentation]

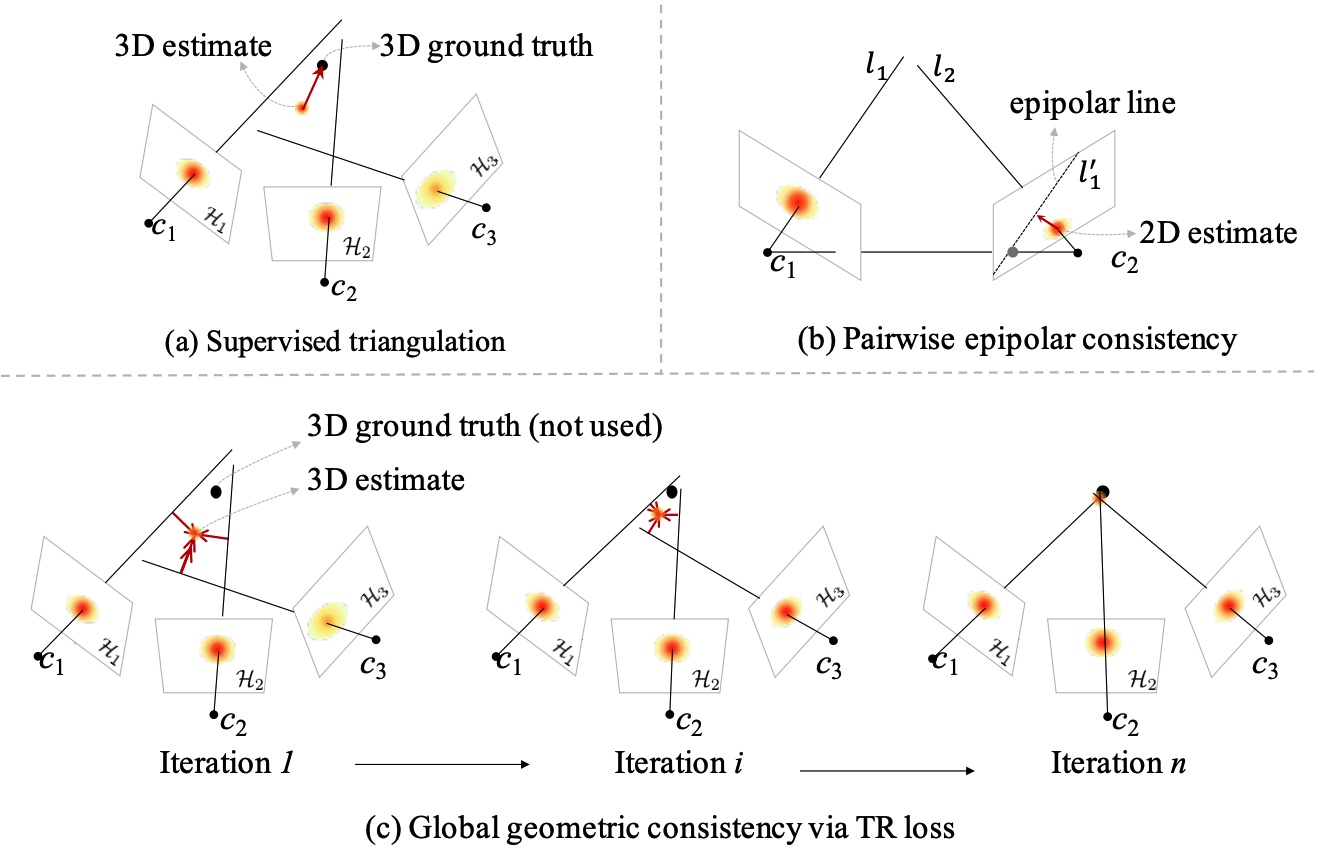

Triangulation Residual Loss for Data-efficient 3D Pose Estimation

Jiachen Zhao, Tao Yu✝, Liang An, Yipeng Huang, Fang Deng, Qionghai Dai✝

NeurIPS 2023

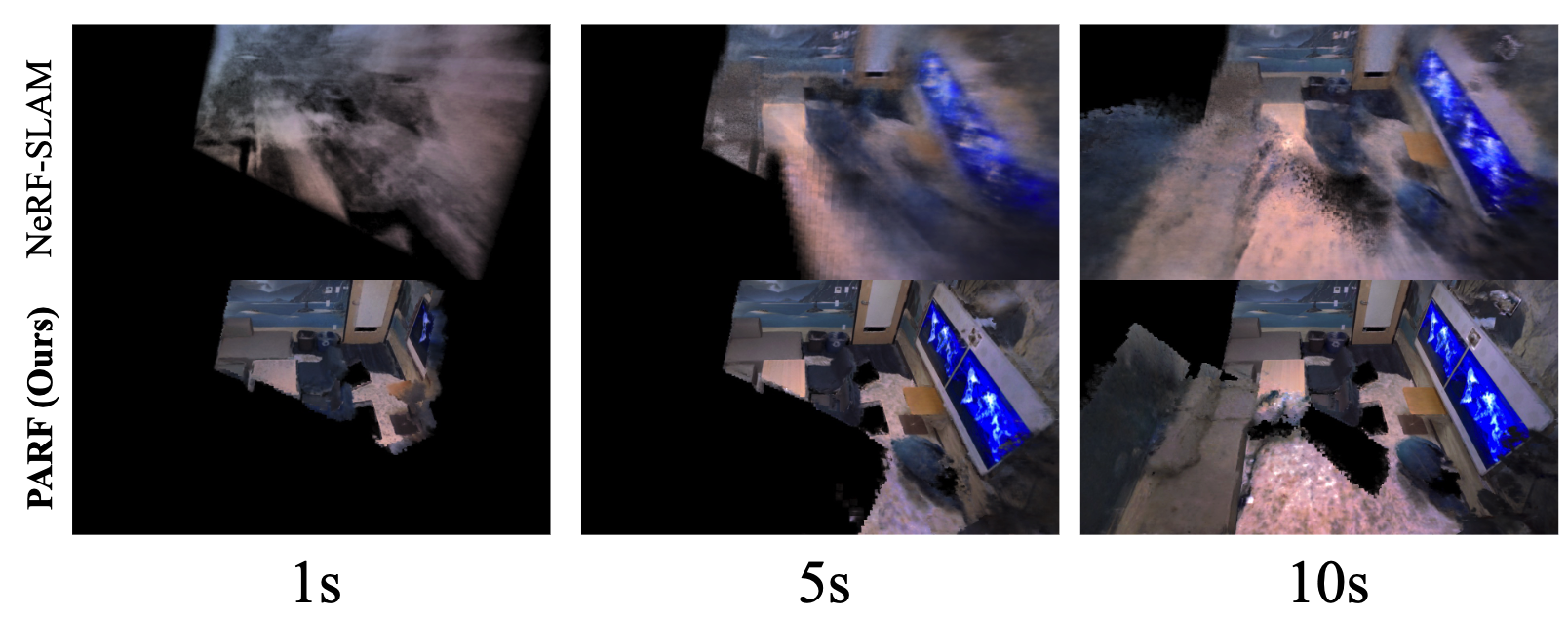

PARF: Primitive-Aware Radiance Fusion for Indoor Scene Novel View Synthesis

Haiyang Ying, Baowei Jiang, Jinzhi Zhang, Jun Zhang, Hongguang Xing, Di Xu, Tao Yu✝, Qionghai Dai, Lu Fang✝

ICCV 2023

OPAL: Occlusion Pattern Aware Loss for Unsupervised Light Field Disparity Estimation

Peng Li*, Jiayin Zhao*, Jingyao Wu, Chao Deng, Yuqi Han, Haoqian Wang✝, Tao Yu✝

IEEE TPAMI

StyleAvatar: Real-time Photo-realistic Neural Portrait Avatar from a Single Video

Lizhen Wang, Xiaochen Zhao, Jingxiang Sun, Yuxiang Zhang, Hongwen Zhang, Tao Yu✝, Yebin Liu✝

ACM SIGGRAPH 2023

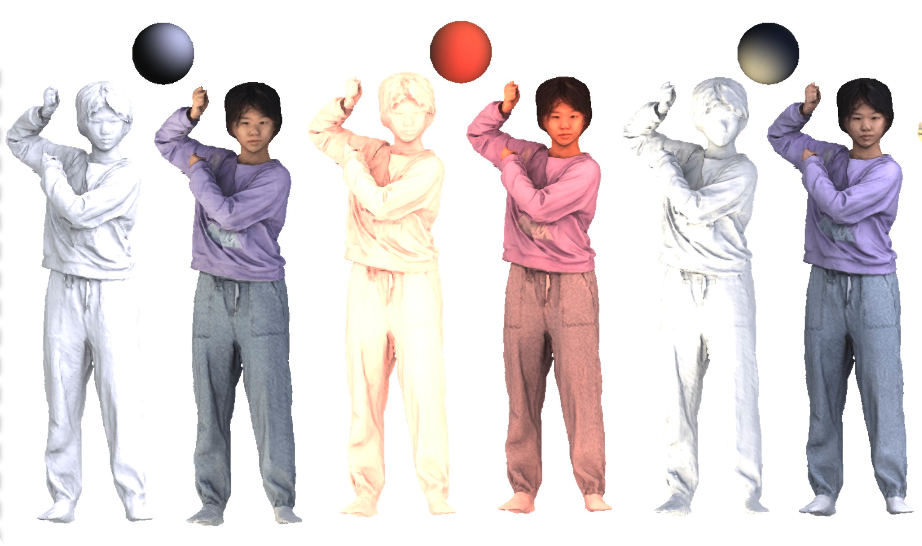

Learning Visibility Field for Detailed 3D Human Reconstruction and Relighting

Ruichen Zheng*, Peng Li*, Haoqian Wang, Tao Yu

IEEE CVPR 2023

Controllable Free Viewpoint Video Reconstruction Based on Neural Radiance Fields and Motion Graphs

He Zhang, Fan Li, Jianhui Zhao, Chao Tan, Dongming Shen, Yebin Liu, Tao Yu

IEEE TVCG

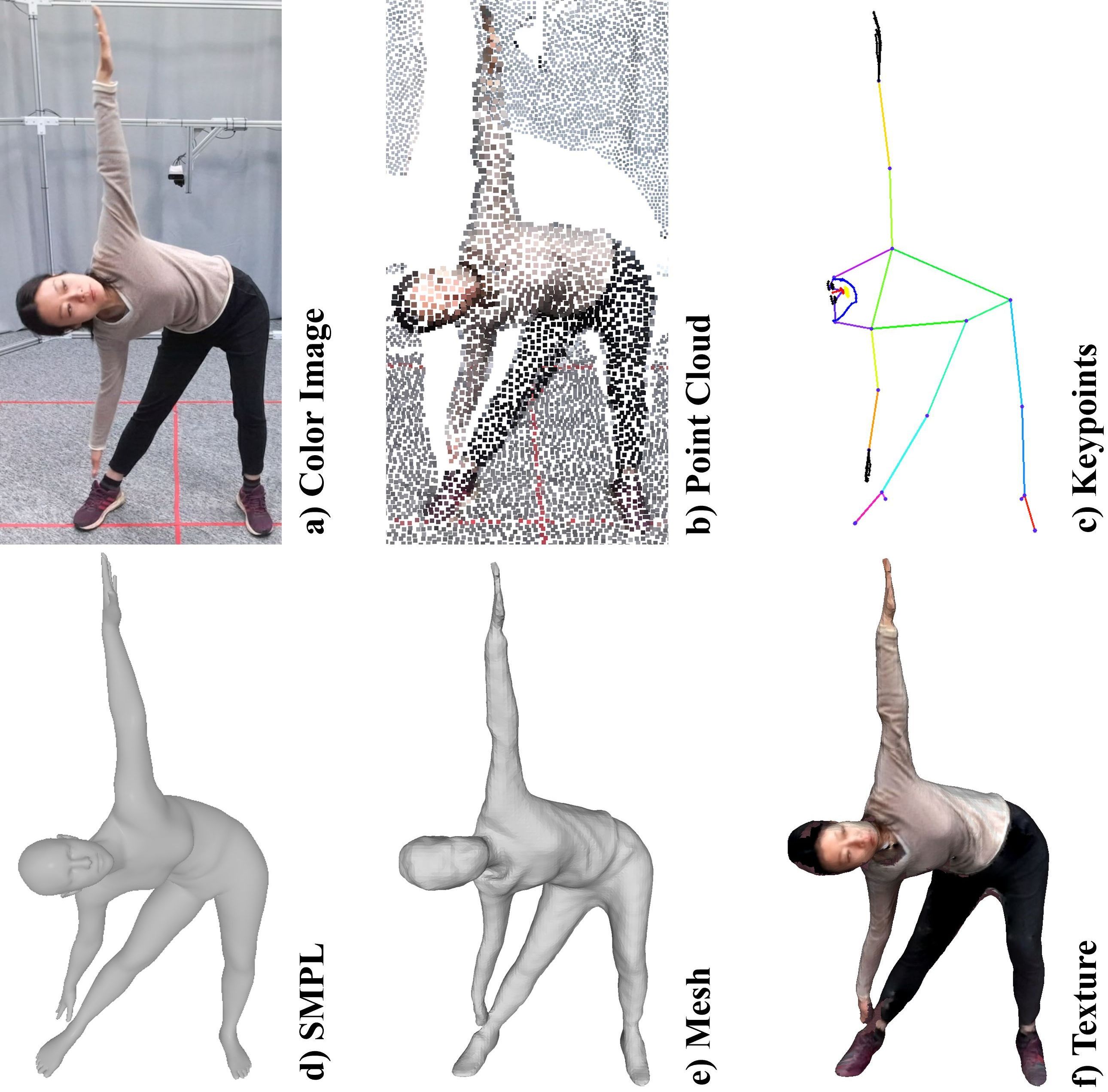

HuMMan: Multi-Modal 4D Human Dataset for Versatile Sensing and Modeling

Zhongang Cai*, Daxuan Ren*, Ailing Zeng*, Zhengyu Lin*, Tao Yu*, Wenjia Wang*, Xiangyu Fan, Yang Gao, Yifan Yu, Liang Pan, Fangzhou Hong, Mingyuan Zhang, Chen Change Loy, Lei Yang✝, Ziwei Liu✝

European Conference on Computer Vision (ECCV 2022 Oral Presentation)

Geometry-aware Single-image Full-body Human Relighting

Chaonan Ji, Tao Yu, Kaiwen Guo, Jingxin Liu, Yebin Liu

European Conference on Computer Vision (ECCV 2022)

GIMO: Gaze-Informed Human Motion Prediction in Context

Yang Zheng, Yanchao Yang, Kaichun Mo, Jiaman Li, Tao Yu, Yebin Liu, Karen Liu, Leonidas Guibas

European Conference on Computer Vision (ECCV 2022)

HVTR: Hybrid Volumetric-Textural Rendering for Human Avatars

Tao Hu, Tao Yu, Zerong Zheng, He Zhang, Yebin Liu, Matthias Zwicker

International Conference on 3D Vision (3DV 2022)

Interacting Attention Graph for Single Image Two-Hand Reconstruction

Mengcheng Li, Liang An, Hongwen Zhang, Lianpeng Wu, Feng Chen, Tao Yu, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2022 Oral Presentation)

Structured Local Radiance Fields for Human Avatar Modeling

Zerong Zheng, Han Huang, Tao Yu, Hongwen Zhang, Yandong Guo, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2022)

DoubleField: Bridging the Neural Surface and Radiance Fields for High-fidelity Human Reconstruction and Rendering

Ruizhi Shao, Hongwen Zhang, He Zhang, Mingjia Chen, Yanpei Cao, Tao Yu, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2022)

FaceVerse: a Fine-grained and Detail-changeable 3D Neural Face Model from a Hybrid Dataset

Lizhen Wang, Zhiyuan Chen, Tao Yu, Chenguang Ma, Liang Li, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2022)

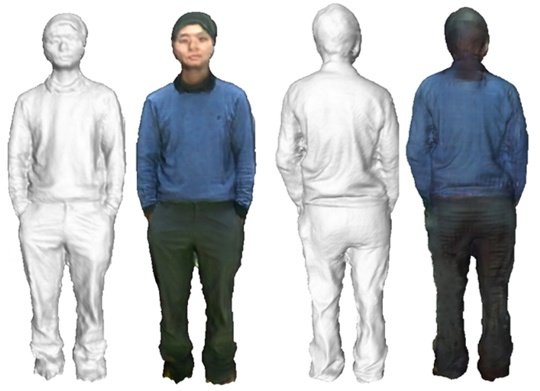

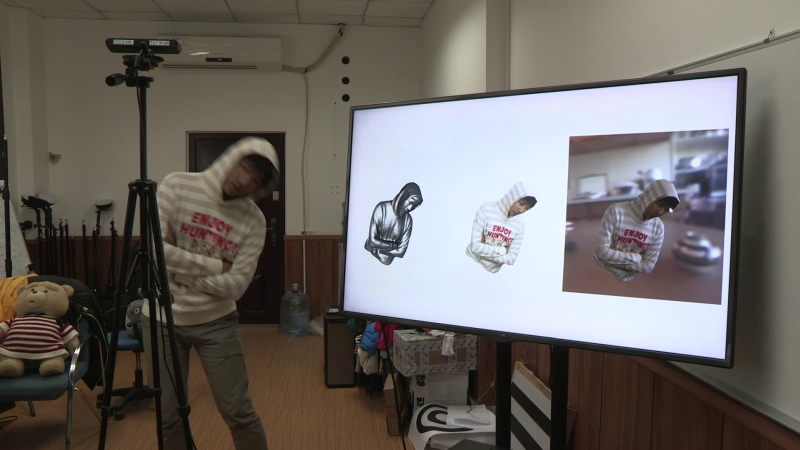

Robust and Accurate 3D Self-portraits in Seconds

Zhe Li*, Tao Yu*, Zerong Zheng, Yebin Liu

IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI 2022)

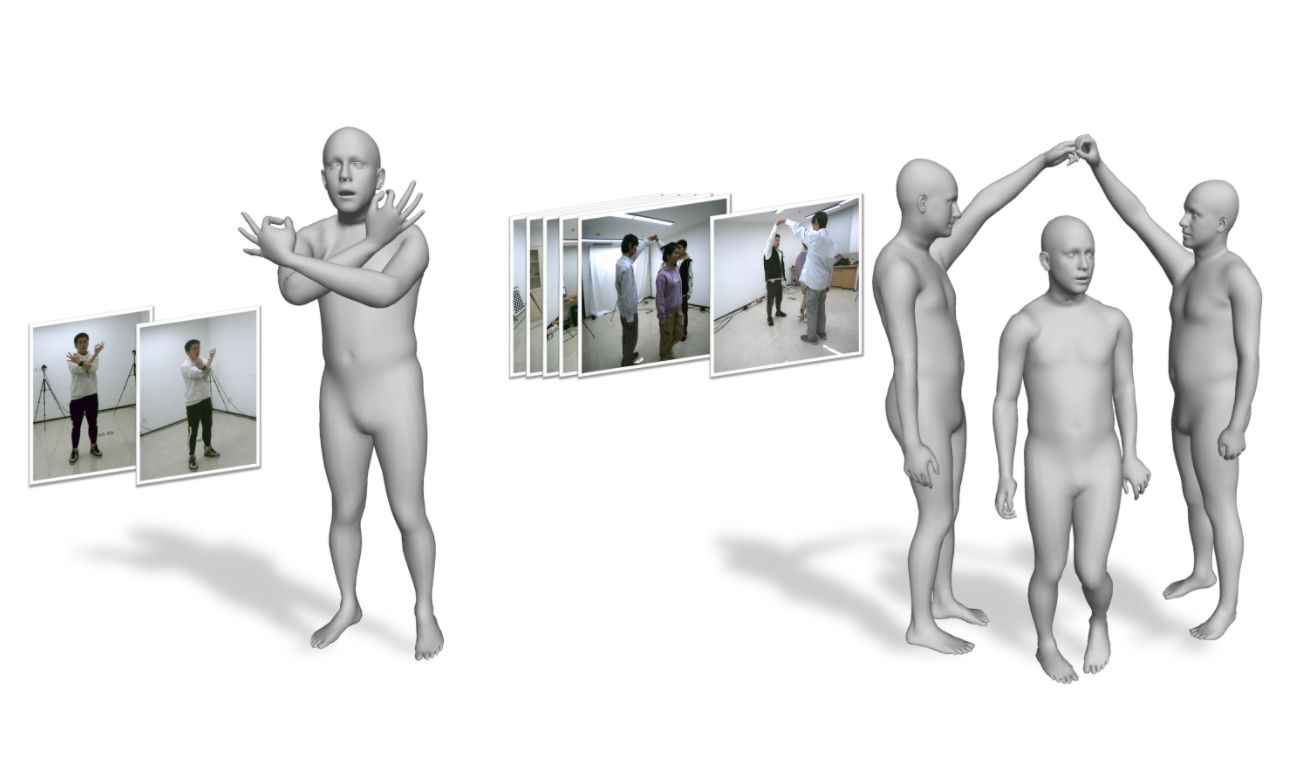

Lightweight Multi-person Total Motion Capture Using Sparse Multi-view Cameras

Yuxiang Zhang, Zhe Li, Liang An, Mengcheng Li, Tao Yu✝, Yebin Liu✝

IEEE International Conference on Computer Vision (ICCV 2021)

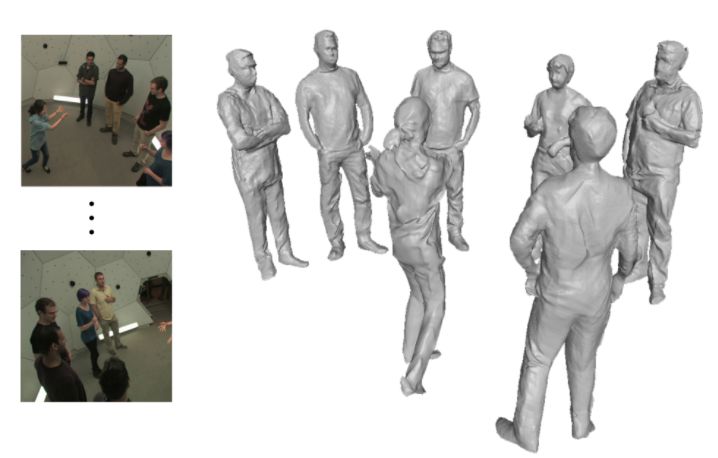

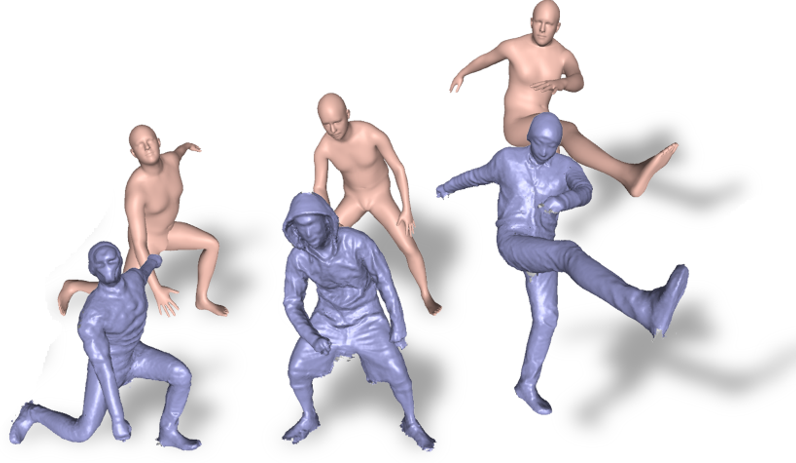

DeepMultiCap: Performance Capture of Multiple Characters Using Sparse Multiview Cameras

Yang Zheng*, Ruizhi Shao*, Yuxiang Zhang, Tao Yu, Zerong Zheng, Qionghai Dai, Yebin Liu

IEEE International Conference on Computer Vision (ICCV 2021)

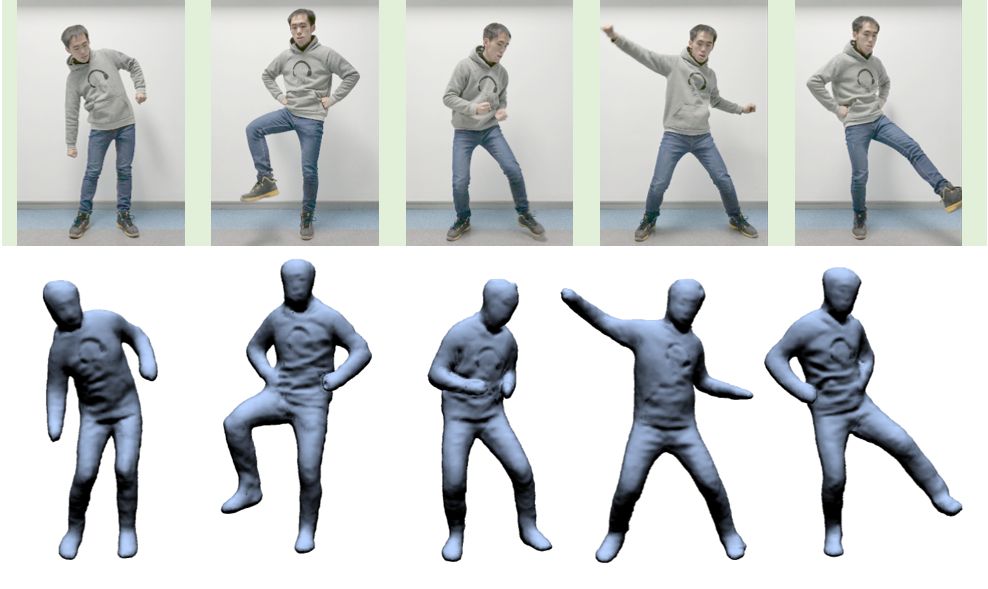

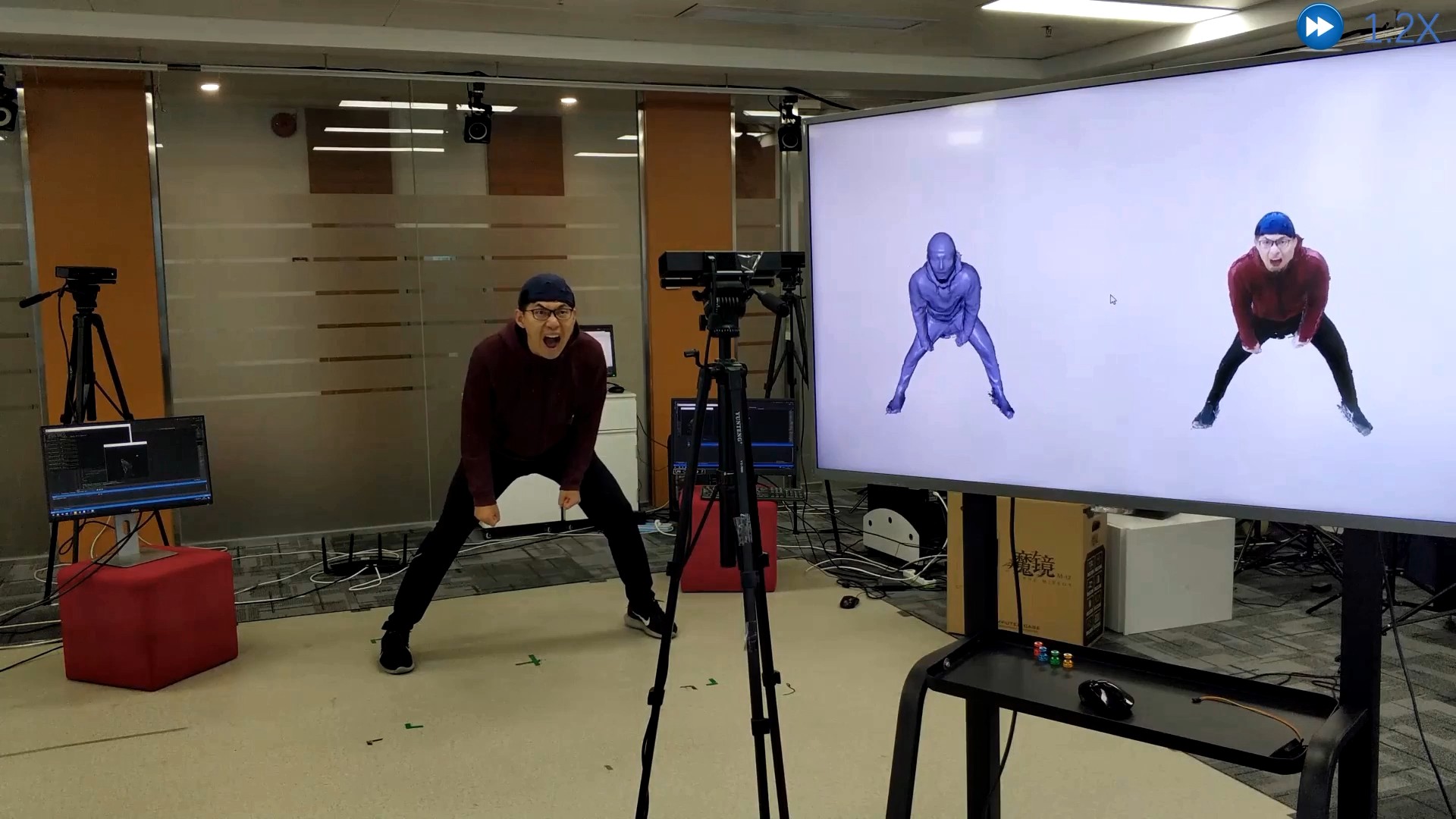

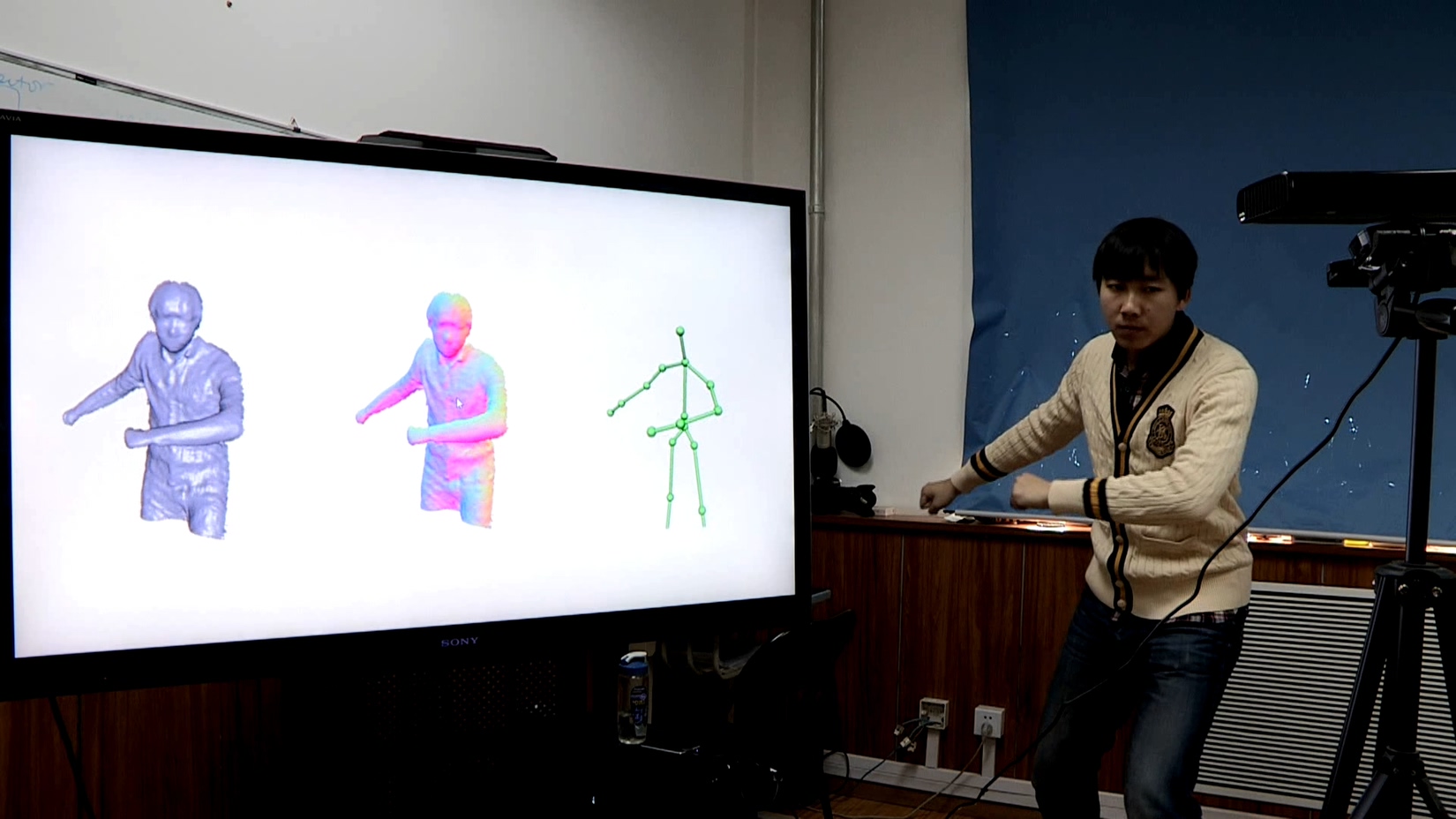

Function4D: Real-time Human Volumetric Capture from Very Sparse Consumer RGBD Sensors

Tao Yu, Zerong Zheng, Kaiwen Guo, Pengpeng Liu, Qionghai Dai, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2021 Oral Presentation)

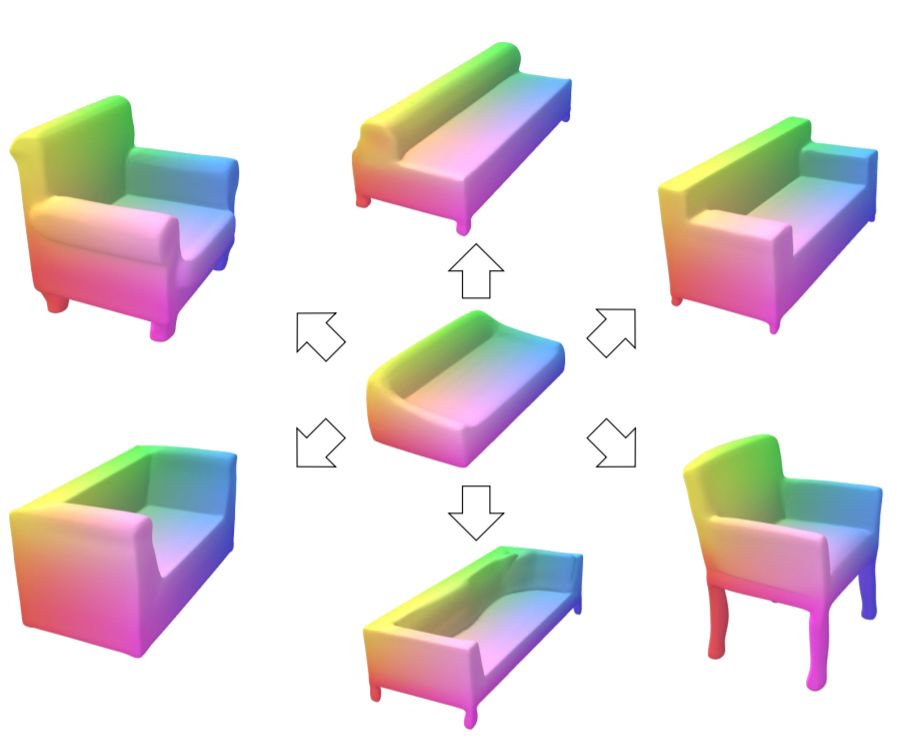

Deep Implicit Templates for 3D Shape Representations

Zerong Zheng, Tao Yu, Qionghai Dai, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2021 Oral Presentation)

POSEFusion: Pose-guided Selective Fusion for Single-view Human Volumetric Capture

Zhe Li, Tao Yu, Zerong Zheng, Kaiwen Guo, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2021 Oral Presentation)

PaMIR: Parametric Model-Conditioned Implicit Representation for Image-based Human Reconstruction

Zerong Zheng, Tao Yu, Yebin Liu, Qionghai Dai

IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI 2020)

MulayCap: Multi-layer Human Performance Capture Using A Monocular Video Camera

Zhaoqi Su, Weilin Wan, Tao Yu, Lingjie Liu, Lu Fang, Wenping Wang, Yebin Liu

IEEE Transactions on Visualization and Computer Graphics (TVCG 2020)

RobustFusion: Human Volumetric Capture with Data-driven Visual Cues using a RGBD Camera

Zhuo Su, Xu Lan, Zerong Zheng, Tao Yu, Yebin Liu, Lu Fang

European Conference on Computer Vision (ECCV 2020 Spotlight Presentation)

Robust 3D Self-portraits in Seconds

Zhe Li, Tao Yu, Zerong Zheng, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2020 Oral Presentation)

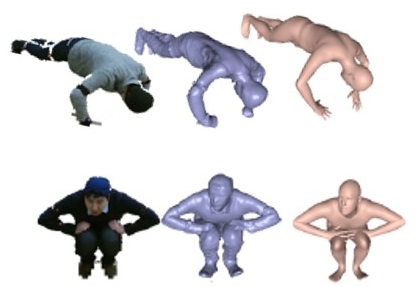

DeepHuman: 3D Human Reconstruction from a Single Image

Zerong Zheng, Tao Yu, Yixuan Wei, Qionghai Dai, Yebin Liu

IEEE International Conference on Computer Vision (ICCV 2019 Oral Presentation)

SimulCap: Single-View Human Performance Capture with Cloth Simulation

Tao Yu, Zerong Zheng, Yuan Zhong, Jianhui Zhao, Qionghai Dai, Gerard Pons-Moll, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2019)

HybridFusion: Real-time Performance Capture Using a Single Depth Sensor and Sparse IMUs

Zerong Zheng, Tao Yu, Hao Li, Kaiwen Guo, Qionghai Dai, Lu Fang, Yebin Liu

European Conference on Computer Vision (ECCV 2018)

DoubleFusion: Real-time Capture of Human Performances with Inner Body Shapes from a Single Depth Sensor

Tao Yu, Zerong Zheng, Kaiwen Guo, Jianhui Zhao, Qionghai Dai, Hao Li, Gerard Pons-Moll, Yebin Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2018 Oral Presentation)


BodyFusion: Real-time Capture of Human Motion and Surface Geometry Using a Single Depth Camera

Tao Yu, Kaiwen Guo, Feng Xu, Yuan Dong, Zhaoqi Su, Jianhui Zhao, Jianguo Li, Qionghai Dai, Yebin Liu

IEEE International Conference on Computer Vision (ICCV 2017)

Real-time Geometry, Albedo and Motion Reconstruction Using a Single RGBD Camera

Kaiwen Guo, Feng Xu, Tao Yu, Xiaoyang Liu, Qionghai Dai, Yebin Liu

ACM Transactions on Graphics (Presented at SIGGRAPH 2017)