IEEE/CVF CVPR 2023

Learning Visibility Field for Detailed 3D Human Reconstruction and Relighting

Ruichen Zheng*,1,2, Peng Li*,1, Haoqian Wang1, Tao Yu1

1 Tsinghua University, China; 2 Weilan Tech, Beijing, China

Abstract

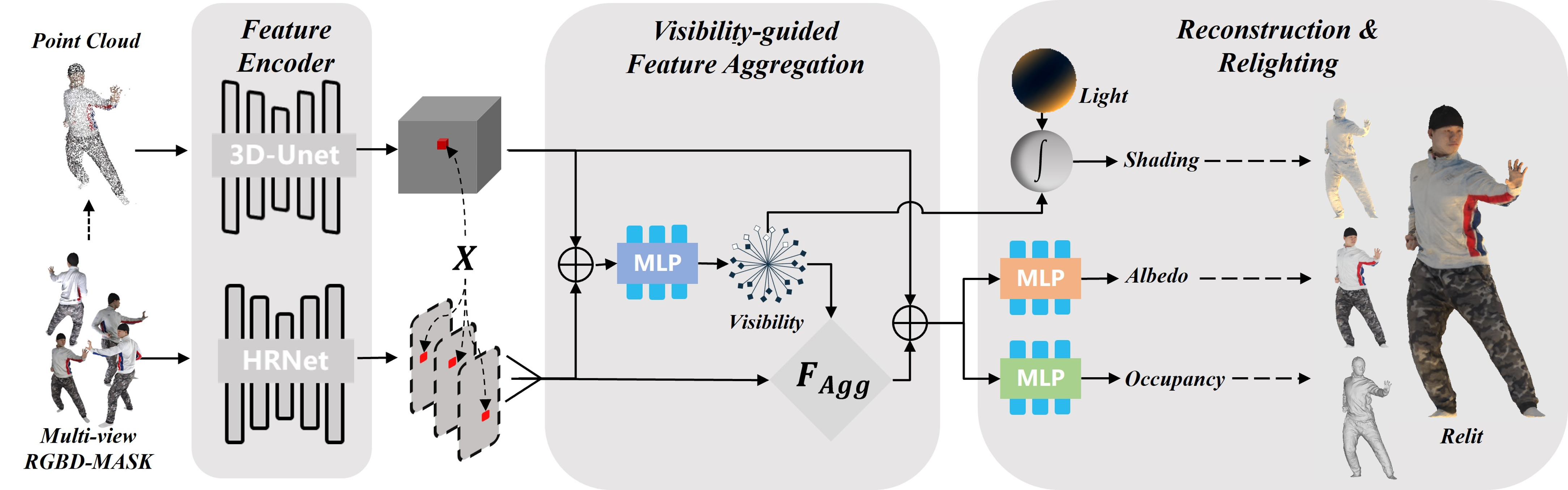

Detailed 3D reconstruction and photo-realistic relighting of digital humans are essential for many applications. To this end, we propose a novel sparse-view 3D human reconstruction framework that tightly couples the occupancy field and albedo field with an additional visibility field. The visibility field not only resolves occlusion ambiguity in multi-view feature aggregation but can also be used to evaluate light attenuation for self-shadowed relighting. To make it practical and efficient to train, we discretize visibility onto a fixed set of sample directions and supply it with coupled geometric 3D depth features and local 2D image features. We further propose a novel rendering-inspired loss, TransferLoss, that implicitly enforces alignment between the visibility and occupancy fields, enabling end-to-end joint training. Extensive experiments demonstrate the effectiveness of the proposed method: it surpasses the state of the art in reconstruction accuracy while achieving relighting accuracy comparable to ray-traced ground truth.
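The abstract describes visibility discretized onto a fixed set of sample directions and then used to attenuate light for self-shadowed relighting. The Python sketch below illustrates that general idea only; it is not the authors' implementation. Everything in it is an assumption: the directions come from a Fibonacci lattice with equal quadrature weights 4π/K, per-direction visibility is computed by ray-marching a toy occupancy function (standing in for the learned visibility field, which TransferLoss aligns with the occupancy field), and shading is plain Lambertian, albedo/π times the visibility-weighted irradiance. The names `fibonacci_directions`, `ray_marched_visibility`, `irradiance`, `shade`, and `scene_occupancy` are hypothetical.

```python
import math
from typing import Callable, List, Tuple

Vec = Tuple[float, float, float]
Occupancy = Callable[[Vec], float]


def fibonacci_directions(k: int) -> List[Vec]:
    """Return k near-uniformly spread unit vectors (Fibonacci lattice, an assumption)."""
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    dirs: List[Vec] = []
    for i in range(k):
        z = 1.0 - 2.0 * (i + 0.5) / k  # equal spacing in z gives equal-area bands
        r = math.sqrt(max(0.0, 1.0 - z * z))
        phi = golden_angle * i
        dirs.append((r * math.cos(phi), r * math.sin(phi), z))
    return dirs


def ray_marched_visibility(occ: Occupancy, p: Vec, d: Vec,
                           max_dist: float = 3.0, steps: int = 64,
                           eps: float = 1e-2) -> float:
    """Binary visibility: 0.0 if any sample along p + t*d is occupied, else 1.0."""
    for s in range(steps):
        t = eps + (max_dist - eps) * s / steps
        q = (p[0] + t * d[0], p[1] + t * d[1], p[2] + t * d[2])
        if occ(q) > 0.5:
            return 0.0
    return 1.0


def irradiance(vis: List[float], dirs: List[Vec], normal: Vec,
               light: Callable[[Vec], float]) -> float:
    """Approximate E = integral of V(w) L(w) max(0, n.w) dw with weights 4*pi/K."""
    weight = 4.0 * math.pi / len(dirs)
    total = 0.0
    for v, w in zip(vis, dirs):
        cos_theta = normal[0] * w[0] + normal[1] * w[1] + normal[2] * w[2]
        if cos_theta > 0.0:
            total += v * light(w) * cos_theta
    return weight * total


def shade(albedo: float, e: float) -> float:
    """Lambertian outgoing radiance: albedo / pi times irradiance."""
    return albedo / math.pi * e


def scene_occupancy(q: Vec) -> float:
    """Toy scene: unit sphere resting on the ground plane z = 0 (z < 0 is solid)."""
    in_sphere = q[0] ** 2 + q[1] ** 2 + (q[2] - 1.0) ** 2 < 1.0
    return 1.0 if in_sphere or q[2] < 0.0 else 0.0


def uniform_light(w: Vec) -> float:
    """Constant environment radiance from every direction."""
    return 1.0


dirs = fibonacci_directions(512)  # one fixed direction set shared by every point
top, ground = (0.0, 0.0, 2.01), (1.2, 0.0, 0.01)
up = (0.0, 0.0, 1.0)

# One fixed-length visibility vector per query point.
vis_top = [ray_marched_visibility(scene_occupancy, top, d) for d in dirs]
vis_ground = [ray_marched_visibility(scene_occupancy, ground, d) for d in dirs]

E_top = irradiance(vis_top, dirs, up, uniform_light)        # nothing blocks the upper hemisphere
E_ground = irradiance(vis_ground, dirs, up, uniform_light)  # the sphere blocks part of the sky
print(f"top: {shade(0.8, E_top):.3f}, ground: {shade(0.8, E_ground):.3f}")
```

Under a uniform environment light, the point on top of the sphere receives the full cosine-weighted irradiance π, so its shading equals its albedo, while the point on the ground beside the sphere is darker because directions toward the sphere have zero visibility. Because the direction set is fixed, visibility becomes a fixed-length vector per point, which is convenient both to predict and to reuse under any new lighting.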


BibTeX

@InProceedings{Zheng_2023_CVPR,
    author    = {Zheng, Ruichen and Li, Peng and Wang, Haoqian and Yu, Tao},
    title     = {Learning Visibility Field for Detailed 3D Human Reconstruction and Relighting},
    booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
    month     = {June},
    year      = {2023},
    pages     = {216--226}
}